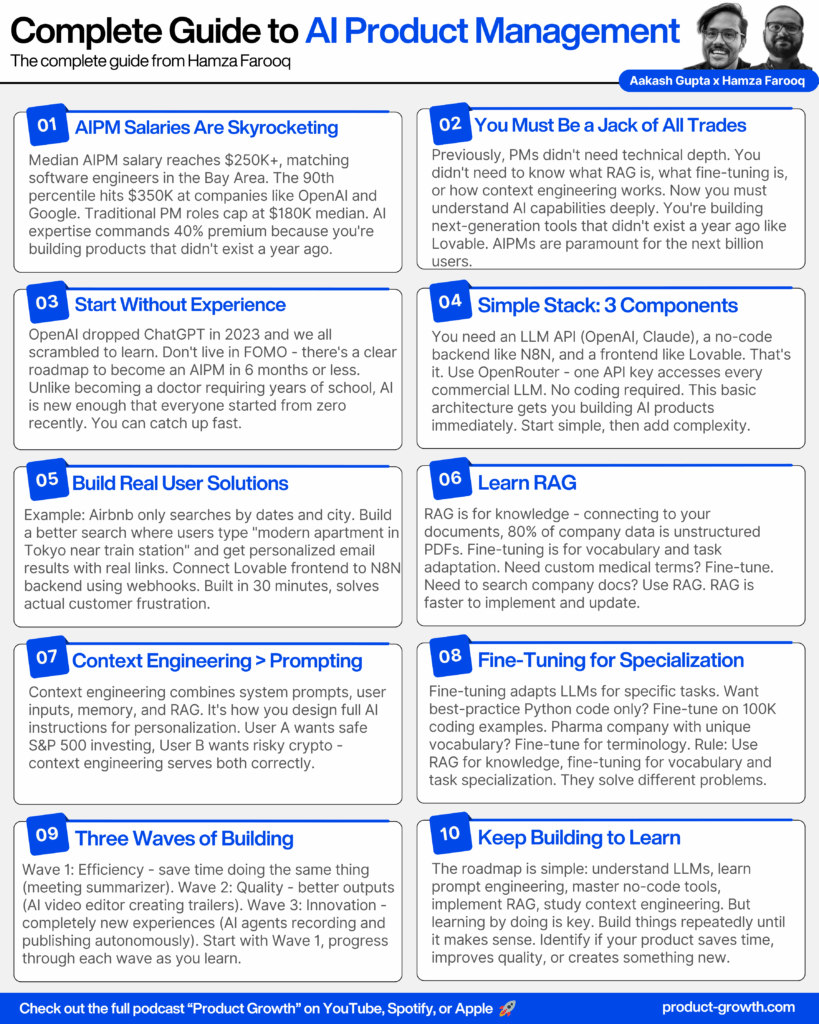

A comprehensive guide to becoming an AI Product Manager from Hamza Farooq, who’s worked with Home Depot, TripAdvisor, and Jack in the Box on their AI products. Learn why AIPM salaries are skyrocketing to match software engineering compensation, the exact 6-month roadmap to transition into the role, and follow along as we build a working AI product from scratch in 30 minutes using no-code tools.

Here is the transcript:

Introduction (00:00:00 – 00:01:15)

Aakash: There’s a lot of hype on AI, but it’s actually the opposite when it comes to AIPM roles. Look at the median total comp that is available for them. The median salary for AIPM is skyrocketing. Hamza Farooq, an expert who has worked with companies like Home Depot, TripAdvisor, and Jack in the Box on their AI, is gonna give you all of the sauce for free.

Hamza: Previously, you didn’t need to know the technical details, you didn’t need to know what RAG is, you didn’t need to know what fine tuning is, you didn’t need to know how context engineering works. Now you have to be a jack of all trades. You need to know exactly what AI can do.

Aakash: If they want to go from here, what else is there in the 6 month roadmap to go from no experience to PM at OpenAI or Anthropic?

Hamza: And within 30 minutes, we were able to build a Lovable front end, connect it to N8N, and have RAG working right in front of us. In today’s episode, we’re going to teach you everything from prototyping to RAG and agents: all you need to know about how to become an AIPM.

Is AIPM Real or Just Hype?

Aakash: Hamza, welcome to the podcast. So the topic today is AI product management, and where I wanna start is: is AI product management all hype? A lot of people have been telling me this role doesn’t even exist. A lot of product managers have been saying people are talking too much about AIPM. Is it real?

Hamza: Well, I’ll tell you one thing, there’s a lot of hype on AI, but it’s actually the opposite when it comes to AIPM roles, because who’s gonna make them into real products, into real systems, so that users can associate with that? And I just want to show you one screen, just one detail to show you the growth that we have seen for the requirement of AIPMs across the board. It’s just because these things are a need for today. You need to learn all these things so that you can build products so users can go beyond using ChatGPT.

The data speaks for itself. Looking at median total compensation, AIPM salaries are skyrocketing. They are second only to software engineers, or even right where software engineers are right now in the Bay Area. OpenAI and Google are obviously playing at the 90th percentile, with Oracle paying at the 10th percentile. Overall, AIPM is a highly lucrative field.

Why AIPMs Command Premium Salaries

Aakash: Why do you think the AIPMs are paid so well?

Hamza: Well, it’s basically not just a PM role anymore. That’s the reason behind it. You have to be a jack of all trades. You need to know exactly what AI can do. Previously, you didn’t need to know the technical details. You didn’t need to know what RAG is. You didn’t need to know what fine tuning is. You didn’t need to know how context engineering works.

What we’re seeing is that the AIPM is needed to understand and build the next generation of tools that did not even exist a year ago. Lovable is a product that we couldn’t even have imagined a year ago, or maybe a little more than a year ago. Who would have thought that you could just build websites just like that? Your AIPM role is paramount to make this available for the next billion users.

Aakash: It’s really thinking ahead about what are going to be the next generation of products. These are going to be AI enabled products. Therefore we need PMs who deeply understand AI to build them.

Hamza: Exactly, because if you don’t know what AI can do for you, you are stuck just following what GPT is doing, what OpenAI is coming up with. You have to think: I’m an e-commerce company. How can I use AI to get what’s best for my customers? That’s why you need to know what AI can do for you.

Can You Become an AIPM Without Experience?

Aakash: Does any of the content around AIPM really matter if you don’t have AI experience? Can somebody without experience become an AIPM?

Hamza: Well, that’s what people have done in the past couple of years. Like, let’s say if you want to become a doctor, you have to go through school, you have to have learning, you have training. Well, you know, to be honest, OpenAI just dropped GPT to us in 2023, and we all had to scramble and learn. And that’s the biggest thing that we tell people: don’t live in FOMO. There is a roadmap. There is stuff, there is training that you can achieve, assimilate, and actually become an AIPM in like 6 months or less.

Aakash: Amazing. So what are the key skills? What’s the 6 month roadmap to become an AIPM?

The Essential AIPM Architecture: LLM, Backend, Frontend

Hamza: Well, I think the most important thing is to first identify the tools that you need to learn to get started. And if you look at the slides, this slide seems a little daunting, like, oh my God, I have to learn so many things. But honestly, it’s how you use them, and you don’t have to use every single one of them.

Let me draw a very basic architecture design for you, the simplest that should get you started. You need to know how to use an LLM as an API endpoint. So you have GPT available to you. You have ChatGPT available to you as an application. They all have API endpoints, so you need to have an API connection or be able to understand how to use an API, which is almost literally getting a key and you’re ready to go.
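To make "getting a key and you’re ready to go" concrete, here is a minimal Python sketch of what a call to an LLM API endpoint looks like. It assumes an OpenAI-style chat completions endpoint such as the one OpenRouter exposes; the URL, the model name, and the placeholder key are assumptions for illustration, not something shown in the conversation. The request is only built here, not sent.

```python
import json
import urllib.request

# Assumption: an OpenAI-compatible chat completions endpoint (OpenRouter's is used as the example).
API_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_chat_request(api_key: str, model: str, user_message: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for one user message."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            # The API key travels in the Authorization header as a bearer token.
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder key and an assumed model identifier, for illustration only.
request = build_chat_request("sk-placeholder", "deepseek/deepseek-chat", "How are you?")
# Sending it would be: urllib.request.urlopen(request)
```

A no-code tool hides this step, but every LLM node is ultimately making a request of roughly this shape: a key, a model name, and a list of messages.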

From that, you need a backend ecosystem, for example, N8N. N8N is this magical ecosystem which is completely no code. You don’t need to write a single line of code, and this amazing tool gets you up and running with the backend of the engine that becomes your AI product.

And then like any other tool you need to build, you need to have a front end or the main landing screen where your users will communicate with you. So if you go here, you basically want a front end, and we use something like Lovable to build a front end. And again, this is a no code tool. LLM, backend, frontend, pretty simple architecture.

Building a Real AI Product: The Airbnb Search Demo

Hamza: Now let me show you a simple example. Aakash, I’m gonna ask you: do you use Airbnb?

Aakash: Of course, right? I am the biggest fan of Airbnb. If they had tiers, I’d be platinum tier.

Hamza: Now the one thing that I hate about Airbnb is actually I cannot search anything beyond the dates and a city. I literally cannot do anything beyond that. So I built this unofficial version of Airbnb, and I really want to show you guys an example of this.

For instance, I want to stay in a modern apartment in Tokyo near the train station, looking from the 25th of November to the 29th of November. All I need to do is just enter my name and my email, and what this tool will do now is it will connect. So this is the front end which is built on Lovable. This is the back end which has been built on N8N.

It will receive the request from the user, and it will call something which is called MCP. And before you worry about what is MCP and what are these things, all I want you to worry about is everything over here is built with no code. All you need to do is build things in the simplest form, and once you have this thing ready, this workflow gets activated.

And once this workflow is activated for you, it will generate a response. It will run behind the scenes, and then it will generate the listings for you that you can find in Tokyo. Once it has discovered all of them, it will send you an email saying, hey, I have found the Airbnbs that are most relevant to what you are looking for.

Aakash: So imagine we identify a customer need. We, as customers, said we would like better search capability, and now we are able to get it. And when I open my email over here, I have the results. It picked up the dates, it picked up locations. It tells you why it’s a good location for you. It’s like a ChatGPT-style natural language concierge for Airbnb, right? And then if you click on a link, it’s actually the right link. It’s a live link.

Step-by-Step: Building Your First AI Product with N8N

Aakash: OK, so we’ve seen the end product. Can you walk us step by step how we would build this ourselves?

Hamza: Absolutely. So there are, as I said, three major components to it: there’s an LLM API, there’s a backend where you build N8N, and there is a Lovable front end which you have to work on. So in order to get started, let’s just say we want to start with building the backend API.

The best thing over here is that I have finally gotten around to writing comments, and everything is available for you. This is what N8N looks like over here. You have N8N set up. You’re like, how do I know I need all these components? Well, it’s actually pretty easy. Once you start designing the ecosystem, you’re like, OK, there’s an Airbnb. I need to build an MCP connector. The job of the MCP connector over here is to take your request, connect to the Airbnb app, and pull information from it.

Starting with the Basics: Webhooks and Chat Triggers

Can we build this from scratch? Well, yes, you can, but in order for you to make a lasagna, you should know how to bake something simple. And in order to get us started and not get overwhelmed, let’s start with the most basic building steps.

The first basic step of using Lovable and N8N together is a webhook. A webhook is this magical thing that allows you to communicate across different tools. If you go to our GitHub, I’ve created a roadmap on how to get started. The most basic one is start here, which is literally just a couple of nodes.

What I wanna do is build something that’s able to connect to Lovable and run on N8N at the same time. The basic version of this is that your front end sends its requests to a webhook, and that webhook communicates with N8N and is able to respond back and forth in real time for you.

The first thing we’ll do is click on the webhook over here. This is the best thing: there’s so many examples that are already created that we don’t have to worry about how do I do everything from scratch. This is the most basic way to get started. There’s a JSON file, you copy the JSON file and you come to N8N. You can paste the whole thing and it’s ready to go.

Adding AI Agents and Memory

I’m gonna keep it as simple as possible. How do you get the minimal thing to work for you? You want to add something which is called a chat trigger. A trigger is basically the way you want an agent to start talking to you. It’s what you call to have your agent converse with your ecosystem.

We bring this here, we connect this to our AI agent, and over here we just want to mention that this is connected to a chat trigger. There’s a chat model associated with that. There’s OpenRouter here. We are using the DeepSeek model. The best thing about OpenRouter is that you have access to every single LLM that you can think of; it’s all available for you.

When you open chat, you can say, “How are you?” And it responds, “I’m just a virtual agent. I don’t have feelings, but I’m happy to help you with whatever you need.”

Now, you’re like, Hamza, this is so basic. Why is this important to me? I’ll tell you why. We have an AI agent, we’ve added memory. So I can say, “Hey, I am Hamza and I like chocolate ice cream.” And then later ask, “Can you tell me something about me?”

You see, N8N is so intuitive and it is so powerful that just using 1, 2, 3, 4 nodes, it’s starting to remember who you are. And if there’s an AI agent, you can connect to any LLM that exists through OpenRouter.
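The "memory" in this demo can be pictured as a short buffer of earlier turns that gets resent to the LLM with every new message. The Python sketch below is a rough illustration of that idea, not N8N’s actual memory node; the class name and the `max_turns` parameter are hypothetical.

```python
from collections import deque


class ConversationMemory:
    """Keeps the most recent messages so they can be resent with each new request."""

    def __init__(self, max_turns: int = 10) -> None:
        # A bounded deque silently drops the oldest message once the limit is reached.
        self.turns = deque(maxlen=max_turns)

    def add(self, role: str, content: str) -> None:
        self.turns.append({"role": role, "content": content})

    def as_messages(self, new_user_message: str) -> list:
        # Earlier turns go first, then the new question, so the model can see both.
        return list(self.turns) + [{"role": "user", "content": new_user_message}]


memory = ConversationMemory(max_turns=4)
memory.add("user", "Hey, I am Hamza and I like chocolate ice cream.")
memory.add("assistant", "Nice to meet you, Hamza!")
messages = memory.as_messages("Can you tell me something about me?")
```

Because the earlier ice cream message is part of `messages`, the model has what it needs to answer the follow-up question about the user.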

Choosing the Right LLM for Your Project

Aakash: What are the rules of thumb or mental things you think about when you’re trying to choose an LLM?

Hamza: Number one is that you wanna start with the usual suspects. You wanna pick up OpenAI, you wanna pick up Claude, you can pick up DeepSeek. They’re all great ones. You don’t wanna go into something which you’ve never heard of. So rule of thumb: pick the ones that you’re familiar with, they’re gonna perform the best, they’re gonna be mostly available, and they’re gonna produce the results that you expect.

I definitely wanna tell people to start using OpenRouter because with one OpenRouter key, you can literally select any LLM that is commercially or freely available. One key to rule them all is sort of the name of the game over here.

Connecting to the Outside World with Webhooks

Hamza: So here what we have is something which is contained within. Everything over here is contained within, there is nothing which is going to the outside world. So how do we get this to interact with the outside world? That’s where things become a little interesting.

We now introduce a new tool which we call webhooks, and what webhooks do is they connect you to the outside world. Instead of using chat, you connect using webhook, and you can remove your chat experience.

So now what we’re doing is adding 2 new nodes. We have a webhook over here to listen for incoming requests. And we have a webhook here which responds to whatever has been requested.

Simple enough: we have a webhook that will listen and pass the information to the AI agent. The AI agent will read the message that has been sent, and then from there it will respond via the second webhook. This is the simplest form, this is as basic as it gets in order to set it up.
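The listen, agent, respond flow can be sketched in a few lines of Python. This is only an illustration of the pattern, not how N8N implements its webhook nodes; the `message` and `reply` field names and the stand-in `echo_agent` are assumptions.

```python
import json
from typing import Callable


def handle_webhook(raw_body: bytes, agent: Callable[[str], str]) -> bytes:
    """Parse the incoming request, hand the message to the agent, and return the response body."""
    payload = json.loads(raw_body)      # 1. the listening webhook receives the request
    reply = agent(payload["message"])   # 2. the AI agent reads the message and answers
    return json.dumps({"reply": reply}).encode("utf-8")  # 3. the response webhook sends it back


def echo_agent(message: str) -> str:
    # Stand-in for the LLM-backed AI agent, so the sketch runs without an API key.
    return f"You said: {message}"


response_body = handle_webhook(b'{"message": "Hey, can you hear me?"}', echo_agent)
```

Swapping `echo_agent` for a function that calls a real LLM would leave the rest of the flow unchanged, which is the point of keeping the webhook and the agent as separate steps.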

Testing with Mock Data

What we do here is we can make a little bit of mock data that will make sure we are able to get the right information over here. We want to set up mock data and test out: are we able to get the results that we hope to get when we try to run this?

What happened just now is that you passed a request through a webhook. You set mock data on a webhook. Now the webhook has information, has a question which says “how many moons does Saturn have?” And what the LLM did is that it responded pretty much as we would see in the chat, but the difference over here is that this entire thing can now be connected to a Lovable front end.

Connecting Lovable to N8N

Hamza: Now what we’ll do is that we’ll go to our Lovable and I’m going to open Lovable. I’m going to write a prompt that says “please build me a finance agent chatbot which is connected to N8N post webhook URL, and the only body message which will be sent as a user query, and you will receive a response from the webhook.”

Simple, right? And then we let it do its job. Usually there’s a bit of tinkering that you need to do. It’s always interesting to see how results happen in real time, but what it’s doing right now is it’s gonna build a finance agent. I’ve given it the information about the webhook, which I’m very interested to see if it starts calling the webhook that we have created over here.

Making It Secure

Aakash: Is it gonna do this securely, like if we deploy this website, is it gonna properly hide this so other people can’t access it?

Hamza: Yeah, so what you can do is add authentication. If you look at N8N, there are authentication options as well. There’s basic auth, there’s header auth, and there’s JWT authentication. So you can build authentication in too.

Let’s say Aakash, you sign in with your email, and you wanna make sure that your information is only relevant to you. That’s where you’re gonna pass the user ID as the authenticator or the auth key which is created from Lovable into your system.
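As a rough picture of what header auth means, the Python sketch below checks that an incoming request carries a shared secret in a header before the workflow does anything else. The header name `X-API-Key` and the function name are hypothetical; N8N lets you choose the header name and value in its own settings.

```python
import hmac


def is_authorized(headers: dict, expected_key: str, header_name: str = "X-API-Key") -> bool:
    """Return True only when the request carries the expected secret in the chosen header."""
    # HTTP header names are case-insensitive, so normalise them before the lookup.
    normalised = {name.lower(): value for name, value in headers.items()}
    supplied = normalised.get(header_name.lower(), "")
    # compare_digest compares in constant time, so timing does not reveal partial matches.
    return hmac.compare_digest(supplied.encode("utf-8"), expected_key.encode("utf-8"))


allowed = is_authorized({"x-api-key": "my-secret"}, "my-secret")
rejected = is_authorized({"x-api-key": "wrong"}, "my-secret")
```

One design note: a secret embedded in front-end code is visible to anyone who opens the browser’s developer tools, so per-user tokens issued at sign-in are usually a safer choice than a single shared key.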

Right now we are just scratching the surface. All we’re trying to do right now is learn how to slice and dice our vegetables.

Testing the Connection

Coming back to Lovable, we have a finance agent that is alive. Now, usually, when you start working with it, what you wanna first see is: can it connect?

I’m gonna write, “Hey, can you hear me?” And what we want to do and make sure over here is that we have our N8N active, which means it is now listening for a request to connect from Lovable. So we come here and we say, “Hey, can you hear me?”

This is the moment of truth. What happens here is that it will go and let’s see what happens. It went over here and we see the same message in both places. Exactly. So it’s starting to hear.

The thing that we need to focus on is matching the webhook response or the request of webhook to how we’re seeing it coming to us. This is the only technical part that we need to know: when we have the execution part and we received a webhook, we’re trying to see what was the message that was sent to us.

If you look at this architecture design, this is the entire header. These are the parameters, this is the query that was sent to us, and this is the message. So what we’re looking for is the webhook structure. Essentially, we want the fields our workflow reads to match the structure of what we have actually received from our users.
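The matching step can be sketched as follows, assuming the received item has the headers, params, query, and body sections described above, and that the front end sends the user’s text in a body field called `message` (a hypothetical name; use whatever your front end actually sends).

```python
def extract_message(webhook_item: dict, field: str = "message") -> str:
    """Pull the user's text out of the body of a received webhook item."""
    body = webhook_item.get("body") or {}
    if field not in body:
        # Fail loudly: the front end and the workflow disagree on the field name.
        raise KeyError(f"expected body field {field!r}, got {sorted(body)}")
    return body[field]


# Illustrative shape of one received item; the values are made up.
received = {
    "headers": {"content-type": "application/json"},
    "params": {},
    "query": {},
    "body": {"message": "Hey, can you hear me?"},
}
user_text = extract_message(received)
```

If the front end is later changed to send a different field name, the lookup fails with a clear error instead of silently passing an empty prompt to the agent.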

Understanding RAG: Making AI Search Your Documents

Aakash: So the topic we need to all know about and learn a lot about is RAG. Can we learn about what RAG is and see that in action?

Hamza: Yes, absolutely. So let me start with the most basic question for you. How many documents—PDFs, presentations, things—how many documents do you think you have on your laptop right now?

Aakash: Thousands, tens of thousands.

Hamza: Right. Now let’s take it to the next level. Let’s talk about an organization with 10 people, 100 people, 1000 people. You have millions of documents, millions and millions of documents. It is estimated that 80% of all your data in an organization is unstructured, which is PDFs, presentations, memos, all of that is what’s stored over there.

How do you retrieve that information? How do I find out what the latest numbers were from our conversation with Client A? There is no way, unless you do a Control+F, find the relevant name of the document, open the document, read through it, and get to the right answer.

What RAG Does for You

What RAG has done for us in this day and age is that RAG can consume all our documents, store them, and you can literally search as if you’re searching on the internet or like you’re doing Google search. You can literally do a Google search on your own documents, and those documents now are available for you to use anywhere.

And it’s not just that you can ask questions about it. It will not only return you the most relevant document. It will also return you a TLDR: hey, based on my understanding of documents A, B, and C, this is the latest update that has happened, or this is the latest update that you should know about this particular customer.
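The retrieve-then-generate idea can be shown with a toy Python sketch. Real RAG systems typically rank documents with embeddings and a vector store; the word-overlap scoring here is a deliberately simple stand-in, and the document names and contents are invented for illustration.

```python
import re


def tokenize(text: str) -> set:
    """Lowercase the text and split it into a set of words and numbers."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: dict, top_k: int = 2) -> list:
    """Rank documents by how many words they share with the query (toy scoring)."""
    query_words = tokenize(query)
    ranked = sorted(
        documents.items(),
        key=lambda item: len(query_words & tokenize(item[1])),
        reverse=True,
    )
    return [name for name, _ in ranked[:top_k]]


def build_prompt(query: str, documents: dict, top_k: int = 2) -> str:
    """Put the retrieved excerpts in front of the question so the LLM answers from them."""
    names = retrieve(query, documents, top_k)
    context = "\n".join(f"[{name}] {documents[name]}" for name in names)
    return f"Answer using only these excerpts:\n{context}\n\nQuestion: {query}"


# Invented sample documents, for illustration only.
docs = {
    "client_a_q3.txt": "Client A revenue numbers for Q3 were 1.2 million.",
    "offsite.txt": "The team offsite is planned for spring.",
    "client_b.txt": "Client B renewed their contract.",
}
question = "What were the latest numbers for Client A?"
top_document = retrieve(question, docs, top_k=1)
prompt = build_prompt(question, docs, top_k=1)
```

The prompt returned by `build_prompt` is what would be sent to the LLM, which is how the answer ends up grounded in the retrieved document rather than in the model’s general knowledge.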

That is the power of retrieval augmented generation or enterprise knowledge management as we know it. It is one of the fastest growing industries. Like agents are great, but there’s a great company with the name of Glean. Glean started out as a RAG company, and now they’ve introduced agents and other things, but that’s what the major bread and butter has been.

Implementing RAG in N8N

Hamza: Now, in order to make N8N use RAG, there are 2-3 ways that you can do it. The first way is you can basically connect to Supabase. There’s a Supabase vector store, there’s Supabase tools that you can add, and you will have to add documents to it, and you’ll have to design a bunch of things with that. Of course it takes a little bit of time, like it just takes a step by step process to do it.

However, in order to make something work live, let’s just try to make it happen in real time. So our company, we have a tool that we call Traversal Pro, and all you need to do in Traversal Pro is upload a document. It does all the work by itself. It will do everything for you.

Once you have logged in, we have these different toolkits, different projects. Let’s say you have knowledge repositories 1, 2, 3, 4, and what we would like to do is make sure that knowledge repository has only the most relevant documents that we want.

It’s almost like magic that we have this project management handbook over here, and what we can do from here is you can go to the playground. You can ask questions and it gets you to that answer, reading the document for you. It gives you grounded responses on where the answer came from, which part, which page and all of that. It shows you the chunks that it came up with the answer from.

Connecting RAG to N8N

Aakash: OK, Hamza, this is over here. How do I bring it to N8N? How do I go from here to N8N?

Hamza: It’s so easy. You go to an API key, you generate your API key. You save that API key somewhere. Now you’re like, Hamza, I don’t like to write code. Why are you making me write all this code? You don’t have to write any code. Here’s the best part: you don’t have to write any code at all.

You can copy this entire code. This is the code, and you come to N8N and you say, you know what, I want to connect to another API endpoint, so you select an HTTP request. You can just tell the HTTP request, if you could click on import cURL, you can paste the exact thing over there. It will figure out what it needs to do for you. And guess what? It built everything for you.
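To give a feel for what an "import cURL" feature has to do, here is a small Python sketch that pulls the method, URL, headers, and body out of a cURL command. It is not N8N’s implementation and handles only a few common flags; the example URL, key placeholder, and question are hypothetical.

```python
import shlex


def parse_curl(command: str) -> dict:
    """Extract method, URL, headers, and body from a simple cURL command."""
    tokens = shlex.split(command)
    request = {"method": "GET", "url": None, "headers": {}, "body": None}
    i = 1  # skip the leading "curl"
    while i < len(tokens):
        token = tokens[i]
        if token in ("-X", "--request"):
            request["method"] = tokens[i + 1]
            i += 2
        elif token in ("-H", "--header"):
            name, _, value = tokens[i + 1].partition(":")
            request["headers"][name.strip()] = value.strip()
            i += 2
        elif token in ("-d", "--data", "--data-raw"):
            request["body"] = tokens[i + 1]
            if request["method"] == "GET":
                request["method"] = "POST"  # cURL switches to POST when data is supplied
            i += 2
        else:
            request["url"] = token  # anything else is treated as the URL
            i += 1
    return request


# Hypothetical command of the kind an API dashboard might give you to copy.
command = (
    "curl -X POST https://api.example.com/v1/query "
    '-H "Authorization: Bearer YOUR_API_KEY" '
    '-H "Content-Type: application/json" '
    "-d '{\"question\": \"What is a project charter?\"}'"
)
parsed = parse_curl(command)
```

The resulting dictionary holds the same four pieces of information an HTTP request node needs, which is why pasting the command is enough to configure it.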

Here we have saved it. And what we can do is we can call it. Here I’m just gonna define what this tool does for me. We have added a RAG tool right here. The RAG tool is now connected to another backend API.

So N8N is this multifaceted tool—I’m gonna come back to the whiteboard—that can also enable you to connect to multiple API services. You’re not just using the capability of N8N’s orchestration, it has the ability to connect to multiple APIs outside and call that response for you in real time as you are building your product.

Let’s hope this works. I’m going to try to update this and say “you have access to a RAG tool to answer questions about product management.” Now I just saved that. I’m gonna try if it’s able to pick up. Usually it should work.

All right, so it gave you an answer. Now, what we wanna do is see if it used the handbook. You can come to executions over here and you see the executions. If you look at the log here, it called the handbook right here. You see this chunk of text? It’s calling that request from the RAG tool and then combining all the responses. It will look to the memory, and then it will use the LLM to generate an answer for you.

Aakash: This is easier than I thought it was gonna be.

Hamza: Yeah, I mean, me too. For me, I’m happy that it’s working all in real time, right in front of you.

Context Engineering vs Prompt Engineering

Aakash: So RAG is a many tens of billions of dollar industry. People are also talking a lot about fine tuning and context engineering. What do AIPMs need to know about those?

Hamza: Awesome. Well, I’m gonna talk about context engineering, and context engineering for me is the most important thing that an AIPM should know. Usually what we do is that when you’re trying to talk to an LLM, we have a system prompt and we have a user prompt. These are the two things that we have available to us or we basically use them for our LLM to respond to us in the way we would like it to respond.

However, let’s just say Aakash, I want to make it personalized to you and personalized to me. Let’s say we have a finance agent which is listening to your conversation, and you’re like, you know what, I only wanna invest in S&P 500. I wanna do safe trading, I wanna do things that have historically been safe bets. So we wanna say Aakash wants to go after S&P 500.

Now, let’s just say I’m another person. I’m very frivolous, and I’m gonna go into crypto. I’m like, you know what, I don’t make any money. Ever since I quit Google, you realize how much less money you make. So I’m gonna go do or die, all in.

How Context Engineering Works

So how do we make sure that you are getting the right information that is relevant to you, and I’m getting the right information that is relevant to me? That’s where context engineering plays a role.

Here, what we do is that we have a system prompt and a user prompt. Then what we can do is we add memory, which are your past interactions and things that the LLM has learned about you and stored. So we call this the long-term memory. This is also a prompt.

And then we get information, relevant information from the RAG. You just saw the RAG was able to pull context and you were able to get an answer. That’s your RAG connector right there. So when you combine all these things, that’s your context engineering.
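Combining these pieces can be sketched in Python as a function that assembles the system prompt, long-term memory, retrieved RAG chunks, and the user prompt into one set of messages. The function name, the section labels, and the sample facts are assumptions for illustration; real products will format their context differently.

```python
def build_context(system_prompt: str, long_term_memory: list,
                  retrieved_chunks: list, user_prompt: str) -> list:
    """Assemble system prompt, memory, and RAG context into the messages sent to the LLM."""
    parts = [system_prompt]
    if long_term_memory:
        facts = "\n".join(f"- {fact}" for fact in long_term_memory)
        parts.append(f"Known about this user:\n{facts}")
    if retrieved_chunks:
        chunks = "\n".join(f"- {chunk}" for chunk in retrieved_chunks)
        parts.append(f"Relevant documents:\n{chunks}")
    return [
        {"role": "system", "content": "\n\n".join(parts)},
        {"role": "user", "content": user_prompt},
    ]


# Same agent, same question, different stored preference per user.
messages_for_aakash = build_context(
    system_prompt="You are a finance agent.",
    long_term_memory=["Prefers safe bets and only wants to invest in the S&P 500."],
    retrieved_chunks=["Illustrative retrieved note about index funds."],
    user_prompt="Where should I put my savings this month?",
)
```

Calling the same function with a different memory list, for example a preference for crypto, produces a different system message for the same user question, which is where the personalization comes from.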

Prompt engineering is what you tell an LLM. Context engineering is how you design the instructions for your LLM. And that’s the beauty of having the knowledge of context engineering because it then makes your entire ecosystem dance. You can get personalization, you can get specific answers to what you’re looking for, and it understands each user based on that information.

Context engineering is extremely important, more important than prompt engineering now because you have to combine multiple levels of things at the same time to get to that answer in today’s world.

Understanding Fine Tuning

Hamza: The second thing that you’ve mentioned is fine tuning. Fine tuning is all about task adaptation. Usually when you have an LLM, the LLM can produce—you give it a prompt, and for every prompt there’s a response.

However, you want to say, hey, you know what, I don’t want this prompt or this LLM to produce general responses. I want the LLM to only produce Python code and the best practice Python code. So that’s when you fine tune an LLM on best practices of coding, so it becomes a coding agent or a coding LLM.

Fine tuning, I want to say, is task adaptation in which you’re basically telling the LLM this is how I want you to respond, and this is the context behind it. These are 10,000 examples or 100,000 examples of what greatness looks like. And once you give it all those examples, it can really customize to that specific use case and become that specialized LLM.
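Those "examples of what greatness looks like" are usually supplied as a file of prompt and ideal-response pairs. The Python sketch below writes such pairs as JSON Lines in a chat-style layout similar to what several fine-tuning services accept; the exact schema varies by provider, so treat the field names as an assumption, and the two sample pairs as invented.

```python
import json


def to_jsonl(examples: list) -> str:
    """Turn (prompt, ideal_response) pairs into one JSON record per line."""
    lines = []
    for prompt, ideal_response in examples:
        record = {
            "messages": [
                {"role": "user", "content": prompt},
                {"role": "assistant", "content": ideal_response},
            ]
        }
        lines.append(json.dumps(record))
    return "\n".join(lines)


# Two invented examples; a real fine-tuning set would contain thousands.
training_examples = [
    ("Write a function that adds two numbers.",
     "def add(a: int, b: int) -> int:\n    return a + b"),
    ("Write a function that reverses a string.",
     "def reverse(text: str) -> str:\n    return text[::-1]"),
]
jsonl = to_jsonl(training_examples)
```

Each line is an independent example, so the quality and consistency of these pairs is what the fine-tuned model ends up imitating.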

Fine Tuning vs RAG

Another example: let’s say you work for a pharma company, and you want the LLM to remember your vocabulary, the different acronyms you have and the different products that you have built. That’s what you fine tune for.

I want to say vocabulary and not knowledge. Vocabulary refers to adding new words to the LLM. For knowledge, what you want to do is you want to connect to a RAG.

The Complete 6-Month AIPM Roadmap

Aakash: So we’ve given people a preview of the knowledge. We’ve given them the demo. If they want to go from here, what else is there in the six month roadmap to go from no experience to PM at OpenAI or Anthropic?

Hamza: So I’m gonna share a very basic architecture that I tell people. You have to learn by doing things. The best way is to keep building things. This is a basic roadmap:

- You need to know what LLMs are

- In this conversation, we looked into how we can build applications

- We discussed prompt engineering

- Then we looked into RAG

- Now you just have to follow this roadmap in order to build better products

You have to do it, you have to follow this theme over and over again till you get to a point where you have identified this is the roadmap or you’ve started to feel that I am making sense, and this is how you do it.

The Three Wave Approach to Building

One of the most important questions I get from people is: what should I build? Why do I need to—how do I go? And I tell them think of a three wave approach:

Wave 1: Efficiency and Time Savings

The first wave is you want to build something that saves you time: efficiency gains, productivity gains. I’m not doing anything else. I’m just saving time doing the exact same thing.

For example, Aakash, you meet a lot of people. You need maybe a summarizer which says this is the conversation you had with all the people today and these are the action steps that you need to do. That’s your time, cost, and efficiency.

Wave 2: Better Quality Output

The second part is quality, better output. Hey, AI agent, can you take my video, slice and dice it into different parts, and make a trailer out of it?

Wave 3: Something Completely New

The third part is: hey AI, can you actually build an AI recording? As if this is the prototype of the script, I want you to build a script. I want you to have AI agents which act like humans and do the recording for me and then publish it on my YouTube channel.

And now it just makes sense. Instead of just following the roadmap—oh I need to learn tools—you saw within 30 minutes we were able to build Lovable, connected to N8N and have RAG working right in front of us. That’s what you need to do. You need to keep building things and see where your products fit in the business problem that you’re trying to solve for your customers.

Three Critical Questions

Does it solve a user problem? Does it solve an organization problem? Does it align with your business model? Three awesome questions to ask yourself as you guys go on this AIPM learning journey.

The Business of Teaching and Building AI

Aakash: So, I wanna talk to you a little bit here at the end of the episode about you. What is the business of Hamza? How big is the business of Hamza? You were formerly at Google. You were poking fun at it earlier, but those are some serious golden handcuffs. What does the pie chart of your revenue look like today?

Hamza: So there are essentially two different things which I’m doing right now. I run a startup with the name of Traversal AI. We experimented with a lot of different tools. We had initial traction on them also, and we kept building. But where we’ve found PMF is as the company whose tagline is: intelligence that runs your data.

Real World Impact: Manufacturing Example

Imagine manufacturers across the US. You have the P&Gs of the world, you have the Unilevers, but then there are small and medium businesses also. We worked with a manufacturer who builds all the boxes for Amazon. They’re not small and medium, but they’re just like a mom and pop manufacturer that basically produces cardboard boxes for Amazon. They had zero data scientists. They had zero insight into what and how much they should produce. They were reactive.

Which meant that, hey, we’re going to get a request from a customer, and we’re going to scramble. So they were always building just in time.

What we did is that we built an army of agents for them that can process that information and forecast the expected demand for 20,000 SKUs at a daily level: for the next 1 week, for the next 14 days, for the next 3 weeks. It saves money through inventory optimization. It gives them better planning. It saves them money on raw material because they know what the demand’s going to be, so they can purchase it beforehand.

We call this product Olive, and we’re working with Jack in the Box, we’re working with Home Depot for similar use cases. So that’s the company that we have.

The Power of Teaching

The second part of my ecosystem is teaching across various industries. Maven is a huge part of that ecosystem. In fact, one of the major reasons that I was able to quit Google was that I started teaching on Maven, and I made enough money on Maven to get started.

I would say 10-15% of my entire year revenue comes from Maven and teaching different courses apart from Maven. And the remaining comes from working in my organization, working with our customers, subscription services for our products, and that’s how we’ve been building slowly our company and learning about actually becoming an AIPM myself.

Aakash: So you’re practicing these skill sets day in, day out. That’s so cool. Why keep the Maven course around if you have a successful software startup? It feels like most people would just do one or the other.

Why Teaching Matters

Hamza: You see, I’m gonna let you in on a secret. I teach, yes, because I make money, but I teach because I grow. I teach two courses:

Course 1: Foundation AIPM Course

I teach a foundation course, which is an AIPM course, because that’s how I have learned to build empathy towards users and customers. The crowd we get there are folks who really want to build something but do not have the tools to build it, so I learn from their ideas. I learn from their thought process.

Course 2: Developer Course

The second is a developer course, which I teach on what we are building in our company. We’re building in the open, so our entire product is in public. The students are almost like an extension of the team, so we work with them on problems that we are facing and how we solve them. Working with those developers, engineering managers, and senior engineering managers uplifts my technical skill set.

Once, we were sitting with friends and somebody asked me, “Hamza, if money was not a problem, what would you do in your life?” And my wife said, “He will still teach.”

I also teach at Stanford’s SPD. I also teach at UCLA. I also have a book. These are rewarding experiences, because otherwise I would not talk to so many people. Like, I met somebody from Airbnb who was one of my students in the course, and he was like, “I think we should take what you have built for Airbnb to Airbnb and tell them, do this now.”

Final Takeaways

This conversation with Hamza Farooq reveals the transformative power of AI product management and the realistic pathway to breaking into this high-paying field. With median salaries now matching those of software engineers in the Bay Area, AIPM represents one of the most lucrative career pivots available today.

The Essential Skills Stack

The core architecture every AIPM must understand is surprisingly simple: LLM (the brain), Backend (the orchestration layer using tools like N8N), and Frontend (the user interface using tools like Lovable). The beauty of modern no-code tools means you can build functional AI products in under 30 minutes without writing a single line of code.
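As a rough illustration of how the three layers hand off to one another, here is a minimal Python sketch. Everything in it is hypothetical: `fake_llm` is a stand-in for a real model call, `backend` plays the role a workflow tool like N8N would play, and `frontend` stands in for a UI built in a tool like Lovable. It is not how either product works internally.

```python
def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real LLM API call (the "brain")."""
    return f"[model answer to: {prompt}]"


def backend(user_message: str) -> dict:
    """Orchestration layer: validate input, build the prompt, call the model."""
    cleaned = user_message.strip()
    if not cleaned:
        return {"ok": False, "answer": "Please enter a question."}
    prompt = f"Answer briefly: {cleaned}"
    return {"ok": True, "answer": fake_llm(prompt)}


def frontend(user_message: str) -> str:
    """Interface layer: turn the backend response into what the user sees."""
    response = backend(user_message)
    prefix = "Assistant" if response["ok"] else "Error"
    return f"{prefix}: {response['answer']}"


if __name__ == "__main__":
    print(frontend("  What is RAG?  "))
```

Keeping the layers separate like this is one possible design choice: the model, the orchestration logic, or the interface can each be swapped without touching the other two.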

Key Technologies to Master

RAG (Retrieval Augmented Generation) enables AI systems to search and understand your organization’s documents, solving the 80% unstructured data problem that plagues every company. Context engineering goes beyond simple prompt engineering by combining system prompts, user prompts, long-term memory, and RAG to create truly personalized AI experiences. Fine tuning allows you to specialize LLMs for specific tasks or vocabulary, making them domain experts rather than generalists.
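To make the relationship between RAG and context engineering concrete, the sketch below retrieves the most relevant documents for a question and then assembles them with a system prompt, long-term memory, and the user prompt into one context string. It is a toy under stated assumptions: the function names, the stopword list, and the section labels are all made up for illustration, and word overlap is used as the relevance score where real systems would typically use embedding similarity.

```python
import re

# Assumption: a tiny hand-picked stopword list, just enough for the demo.
STOPWORDS = {"a", "an", "the", "is", "are", "what", "for", "of", "on", "in", "to"}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its distinct non-stopword words."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {word for word in words if word not in STOPWORDS}


def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Return up to top_k documents that share the most words with the query."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(doc)), doc) for doc in documents]
    relevant = [pair for pair in scored if pair[0] > 0]
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in relevant[:top_k]]


def build_context(system_prompt: str, memory: list[str],
                  retrieved: list[str], user_prompt: str) -> str:
    """Combine the four context sources into a single prompt string."""
    sections = [
        "SYSTEM:\n" + system_prompt,
        "MEMORY:\n" + "\n".join(f"- {item}" for item in memory),
        "RETRIEVED DOCUMENTS:\n" + "\n".join(f"- {doc}" for doc in retrieved),
        "USER:\n" + user_prompt,
    ]
    return "\n\n".join(sections)


if __name__ == "__main__":
    # Hypothetical knowledge base and user history.
    docs = [
        "Refund policy: customers can return boxes within 30 days.",
        "Shipping: orders leave the warehouse within 2 business days.",
        "Holiday schedule: the plant is closed on national holidays.",
    ]
    question = "What is the refund policy for boxes?"
    context = build_context(
        system_prompt="You are a support assistant. Answer from the documents.",
        memory=["User prefers short answers."],
        retrieved=retrieve(question, docs),
        user_prompt=question,
    )
    print(context)
```

The assembled string is what would be sent to the model. Fine tuning is a separate step that changes the model itself and is not shown here.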

The Three Wave Building Framework

Start with Wave 1: Efficiency gains that simply save time. Progress to Wave 2: Quality improvements that produce better outputs. Finally reach Wave 3: Novel capabilities that create entirely new possibilities. This framework helps you identify what to build at each stage of your AIPM journey.

The 6-Month Roadmap

The path from zero to AIPM is achievable in 6 months through consistent building. Learn the fundamentals of how LLMs work, master no-code tools like N8N and Lovable, understand RAG implementation, practice context engineering, and most importantly—build constantly. Each project should answer three critical questions: Does it solve a user problem? Does it solve an organizational problem? Does it align with your business model?

Real-World Applications

The episode’s live demonstrations prove these aren’t just theoretical concepts. From building an enhanced Airbnb search that understands natural language queries to connecting knowledge bases that can answer questions from thousands of documents, these are production-ready capabilities you can ship today.

For PMs looking to transition into AIPM roles at companies like OpenAI, Anthropic, or any enterprise embracing AI, the message is clear: the tools are mature, the demand is exploding, and the barrier to entry has never been lower. You don’t need a technical background—you need curiosity, willingness to experiment, and the discipline to keep building. The 6-month roadmap is real, the no-code tools work, and the career opportunities are waiting. The question isn’t whether AI product management is the future—it’s whether you’ll seize the opportunity before everyone else catches on.