If you want to break into product management or get promoted to a senior role, you have to master iteration. It's the engine that drives all successful product work, separating the top 1% of PMs from the rest. Simply put, iteration is a cycle: you build the smallest possible thing to test a high-stakes assumption, measure what happens, and use that data to decide what to do next.

The Modern PM's Playbook for Iteration

I like to think of it like a sculptor carving a statue from a block of stone. They don't just glue on new pieces of marble. Instead, they make a cut, step back, look at the whole piece, and then decide where to chip away next. Each action refines the form, bringing the final vision closer to reality. That’s iteration. It’s about shaping and refining what you have based on real-world feedback.

As someone who has hired product managers at every level, from APM to VP, I can tell you this: a deep understanding of iteration is non-negotiable at places like Meta, Google, and Netflix. When a PM who truly gets iteration can command a salary over $200,000, it's clear the market values this skill. Your ability to learn and adapt faster than the competition is your single greatest career asset.

For any PM, understanding iteration isn't just about process—it's about adopting a mindset of continuous improvement that fuels both product wins and your own career growth.

Iteration vs. Incremental vs. Pivot: At a Glance

To really get what iteration is, you have to know what it isn't. I see a lot of junior PMs mix it up with "incremental" work or "pivots," and that confusion leads to bad strategy. They're related, but they serve totally different purposes.

Let's clear this up. Here’s a quick mental model to keep these concepts straight.

| Concept | Core Goal | Example | When to Use |
|---|---|---|---|
| Iteration | Refine and Improve. To make the same thing better based on feedback. | Changing the button color, text, and placement on a sign-up form to improve conversion rates. | When you have a working feature and want to optimize its performance or user experience. |
| Incremental | Build and Add. To deliver a product in functional pieces or chunks. | Releasing a text editor, then adding spell-check, and later adding formatting tools. | When building a large, complex product, delivering value to users in stages. |
| Pivot | Change Direction. A fundamental shift in product, strategy, or target market. | A photo-sharing app realizing its users love the filters and relaunching as a photo-editing tool. | When your core hypothesis is proven wrong and you need a new strategic direction to survive. |

Getting this distinction right is foundational. An iteration improves an existing piece, an increment adds a new piece, and a pivot changes the entire game plan.

To see how these ideas come to life, it helps to understand the core principles of iterative development, which is central to how modern teams build software. And if you want to zoom out and see the bigger picture, our guide on the agile product development process shows how it all fits together. This is the first step in the playbook for building great products and accelerating your PM career.

How Iteration Built the Modern Tech World

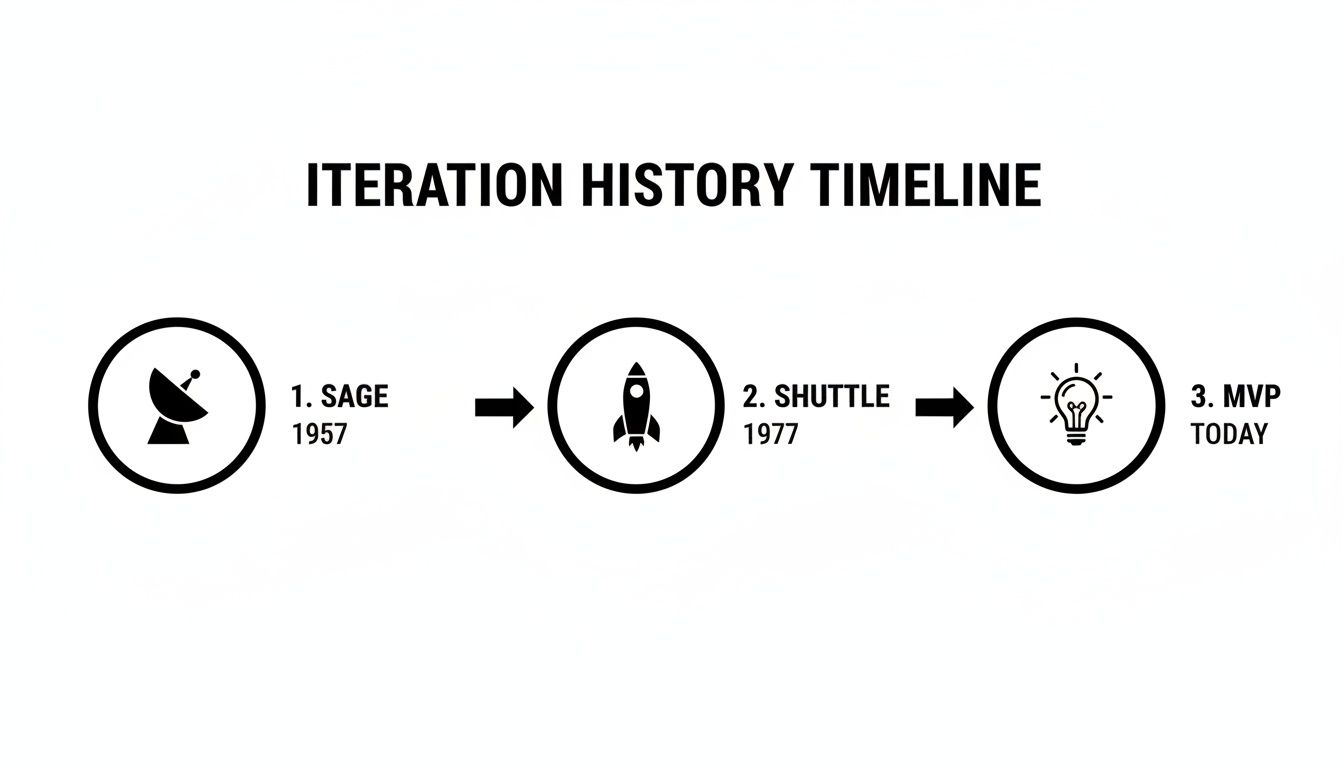

If you think ‘iteration’ is just some new-age startup buzzword, you’re missing the whole story. To truly master it, you need to understand its roots. This isn't a recent trend; it’s a battle-hardened principle born from some of the most complex engineering feats of the 20th century.

As a PM, knowing this history isn't just trivia. It’s a strategic asset. It gives you the weight of proven success when you advocate for this approach, especially when facing skepticism. The core ideas we now associate with iteration were being put into practice more than 40 years before the Agile Manifesto was written in 2001. They were forged in environments where failure was catastrophic and requirements were guaranteed to shift under your feet.

From National Defense to Outer Space

One of the earliest documented cases was the SAGE air defense system back in 1957. Faced with building a mind-bogglingly complex system, the team broke the work into short, iterative cycles of one to six weeks. It was a radical strategy for the time, but it worked. They delivered every single component on time and under budget—a success that still evades plenty of projects today.

This proves that at its core, iteration is a framework for managing uncertainty.

Jump ahead to the Space Race. Between 1977 and 1980, a team at NASA built the space shuttle's primary avionics software. This team, including many veterans from the Mercury program, delivered the software through 17 distinct iterations over 31 months.

Why? A rigid, waterfall-style plan would have been a complete disaster. The shuttle's requirements were literally changing while they were building it. Instead, they used an iterative model where each cycle, averaging about eight weeks, was a time-boxed sprint of development and refinement driven by constant feedback. The result was a system so famously reliable that it powered dozens of missions without any major software failures. You can go deeper on the academic history of early iterative projects in this detailed paper.

The lesson from NASA is crystal clear: when the stakes are high and the path is foggy, rapid, repeated cycles of refinement are the only way to succeed. This is just as true for a PM launching a new feature today as it was for engineers launching a space shuttle back then.

Connecting History to Modern Product Strategy

These historical wins are the direct ancestors of the Minimum Viable Product (MVP) and the lean strategies that top product leaders swear by today. The principles are identical:

- De-risk Uncertainty: Don't build the whole thing and hope it's right. Build a small piece to learn something valuable.

- Incorporate Feedback: Use what you learn from one cycle to make the next one smarter, ensuring the final product actually solves a real-world problem.

- Deliver Value Sooner: Ship functional pieces of the puzzle early and often. This builds momentum and lets you course-correct before it's too late.

The modern growth playbooks that scaled companies like Stripe into product powerhouses are built on these exact feedback loops. This is why understanding iteration isn't just about process management. It's about connecting your daily work to a powerful, time-tested framework for building resilient, high-growth products.

Whether you're building a defense system, a space shuttle, or the next unicorn startup, the fundamental logic holds.

Running the Build-Measure-Learn Loop

The history of iteration gives us the "why," but the Build-Measure-Learn loop is all about the "how." Coined by Eric Ries in The Lean Startup, this is the operating system for effective iteration. As a PM, mastering this loop isn't just a good idea; it's the tactical process you'll run week in and week out to turn half-baked ideas into real customer value.

Think of it as the scientific method, but for product people. You start with a question (your hypothesis), run an experiment to get some data, and then analyze that data to find an answer. This simple, repeatable cycle is what separates the high-velocity teams from the ones who just build features and hope for the best.

This isn’t some new fad. The core idea—reducing uncertainty through rapid cycles of work—has been around for decades, from massive engineering projects to modern apps.

The throughline is clear: whether you're building a radar system in 1957 or a SaaS product today, the goal is always to learn as much as possible with the least amount of work.

Step 1: Build Your Smallest Experiment

The "Build" phase isn't about building a full-blown feature. It’s about building the smallest possible thing you can to test your riskiest assumption. This is your Minimum Viable Product (MVP), and its only job is to generate learning, not revenue.

A classic mistake I see junior PMs make is over-scoping the MVP. They ask, "What's the minimum we can build?" The real question is, "What's the absolute minimum we must build to learn something useful?"

Case Study: Dropbox’s Explainer Video MVP

Before writing a single line of the complex code needed for their file-syncing product, the Dropbox founders had one huge question: Would anyone even want this? Building the actual product would have taken months. Instead, their MVP was a simple, 3-minute explainer video.

- Hypothesis: Tech-savvy early adopters have a painful file-syncing problem and will sign up for a solution that "just works."

- The "Build": A screencast video showing how Dropbox would function, filled with inside jokes for their target audience on Digg.

- The Result: Their beta waitlist exploded from 5,000 to 75,000 people literally overnight. They had validated their core hypothesis without writing a shred of production code.

Step 2: Measure What Matters

Once your experiment is live, the "Measure" phase kicks in. This is where you collect the data to see if your hypothesis was on the money. It's absolutely crucial to define your success metrics before you launch. If you don't, you'll fall into the trap of cherry-picking data to support your own narrative.

You need a mix of two kinds of data:

- Quantitative Data (The "What"): This is the hard data. We're talking conversion rates, user engagement, click-through rates, and task completion times. Having solid A/B testing best practices is essential here to make sure your data is statistically sound.

- Qualitative Data (The "Why"): This is the human insight behind the numbers. It comes from user interviews, surveys, and feedback forms. Why did users drop off? What frustrated them? What did they love?

You can even use AI to get a head start here. For instance, feed user interview transcripts into a model with a prompt like: "Analyze these 5 user interviews about our new onboarding flow. Identify the top 3 friction points and provide 5 direct quotes that illustrate user frustration."
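On the quantitative side, here is a minimal sketch of what "statistically sound" can mean for a simple A/B test. It uses a pooled two-proportion z-test, which is one common choice and not the only valid one. The function name and the sample numbers are hypothetical, and the normal approximation assumes reasonably large samples.

```python
from math import erf, sqrt


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two_sided_p_value) for the difference in conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    # Standard normal CDF computed from the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    p_value = 2 * (1 - cdf)
    return z, p_value


# Hypothetical data: control converts 200 of 4,000 users, variant 250 of 4,000.
z, p = two_proportion_z_test(200, 4000, 250, 4000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests the difference is unlikely to be noise. It says nothing about whether the lift is big enough to matter, which is why the success threshold still has to be set before launch.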

Step 3: Learn and Decide

This is the most critical step of all. Data is useless if you don't learn from it. In this phase, you analyze both your quantitative and qualitative feedback to answer one simple question: Was our hypothesis correct?

The answer leads to a clear, disciplined decision.

Based on the data, you must make a choice: persevere, pivot, or kill.

- Persevere: The data backs you up. Your hypothesis was right, and you're on the right track. The next iteration should focus on optimizing what you built or adding the next logical piece.

- Pivot: Your core idea has legs, but your initial strategy was off. You need to make a major change—like targeting a new customer segment, changing the core feature, or even overhauling the pricing model.

- Kill: The data shows your hypothesis was fundamentally wrong. The idea isn't working, and throwing more resources at it won't fix it. It’s tough, but killing a bad idea is one of the most valuable things a PM can do. It frees up your team for a better one.
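To make the three outcomes concrete, here is a rough sketch of the decision as a rule. Treat it as an illustration and not a formula: the `decide` function, its inputs, and the idea of reducing qualitative research to a single `problem_validated` flag are all assumptions made for the example.

```python
from enum import Enum


class Decision(Enum):
    PERSEVERE = "persevere"
    PIVOT = "pivot"
    KILL = "kill"


def decide(observed_lift: float, target_lift: float, problem_validated: bool) -> Decision:
    """Map experiment results to a next step.

    observed_lift / target_lift: relative change in the primary metric.
    problem_validated: whether qualitative research suggests users really
    have the underlying problem (a judgment call, not a computed value).
    """
    if observed_lift >= target_lift:
        # The data backs the hypothesis.
        return Decision.PERSEVERE
    if problem_validated:
        # The problem is real, but this approach missed the target.
        return Decision.PIVOT
    # Neither the metric nor the research supports the idea.
    return Decision.KILL


print(decide(observed_lift=0.01, target_lift=0.05, problem_validated=True))
```

In practice the inputs are messier than two numbers and a flag, but writing the rule down before the data arrives keeps the decision disciplined.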

This disciplined cycle of Build-Measure-Learn is the engine that drives product growth. By running it effectively, you stop building what you think users want and start building what you know they need.

How Top PMs Plan Iteration Cadence and Scope

Great iteration is all about rhythm and focus, not just raw speed. This is one of those areas where senior PMs really pull away from the pack. They get that a product’s heartbeat is its iteration cadence—that regular, predictable cycle of building, shipping, and learning. Nailing this rhythm is absolutely critical for keeping your team energized, stakeholders confident, and the product on the right track.

The cadence you land on is a strategic decision, a trade-off. There’s no magic one-size-fits-all answer here. It all comes down to your product, your team, and what kind of uncertainty you’re trying to tackle.

Choosing Your Iteration Cadence

If you look inside top tech companies, you'll find they use a few different cycle lengths, each picked for a specific job.

One-Week Cycles: These are perfect for rapid-fire validation, especially in a product's early days. You use this when you need to test a critical assumption fast—like a new headline on your landing page or a tweak to your pricing. The point isn't to write flawless code; it's to get a quick signal from the market.

Two-Week Cycles: This is the industry standard for a reason. It's the sweet spot for most Scrum teams. You get enough time to build a small but meaningful piece of the product, but you keep the feedback loops nice and tight. Most of your feature enhancements and bug fixes will probably live in this cycle.

Four-Week (or Longer) Cycles: Save these for the big, complex, foundational stuff. Think major backend refactors or the very first version of a deep-tech feature where the engineering or design problems are genuinely hard. The big risk here is the long delay before you get feedback, so you have to be incredibly disciplined with your scope.

When top PMs map out cadence and scope, their project meetings are sharp and decisive. Using a solid project meeting notes template is a non-negotiable for making sure every decision and action item is nailed down. It stops you from falling into the trap of vague chats and gives everyone a clear path forward.

Scoping the Perfect Iteration

Once you’ve got your cadence, the next battle is scope. Honestly, this is where most teams stumble. An iteration stuffed with a bunch of disconnected tasks isn't a focused experiment; it's just a glorified to-do list. Your job as a PM is to be the relentless defender of focus for every single cycle.

A great iteration is defined by the one critical question it answers, not the number of tasks it completes. It's about clarity over quantity.

To get to that kind of clarity, run through a simple checklist before you lock in your scope:

- What is the single most important hypothesis we are testing? Always frame your goal as a question. For example, "Will adding social proof to the checkout page increase conversion by 5%?"

- What is the absolute minimum work needed for a clear signal? You have to resist the temptation to add "nice-to-haves." Be ruthless. Cut anything that doesn't directly help you validate the hypothesis.

- How will we measure success? Define the primary metric (like conversion rate or time-on-page) before a single line of code gets written.

- Is this a one-way or two-way door decision? A small, easily reversible UI change is a "two-way door" and needs less ceremony. A fundamental change to your data architecture is a "one-way door" and requires much more rigor.

For any PM looking to level up, mastering how you manage scope is essential. You can sharpen your skills by reviewing our detailed guide on sprint planning best practices.

AI and Data-Driven Planning

These days, AI tools can help you estimate scope and map out dependencies, making your planning way more precise. You can now feed a set of user stories into an AI model and ask it to flag potential overlaps, spot missing dependencies, or even estimate relative effort based on your team's past sprints. This doesn't replace your judgment, but it augments it, helping you catch risks much earlier in the game.

This data-backed approach just reinforces a truth we've known for decades: iterative models are simply better at adapting and managing costs, especially when requirements are fuzzy. This isn't just some modern Agile fad, either. A DoD analysis of 101 projects found that shorter, time-boxed iterations dramatically increase success rates by forcing teams to ship value early and often, which cuts down the risk of a total project meltdown. You can explore the full research on iterative model efficiency to see the data on how it consistently beats waterfall methods on both budget and scope control.

Real-World Iteration: How Spotify, Instagram, and OpenAI Do It

Theory is one thing, but the real learning happens when you see how the world's best product teams actually operate. I've hired PMs from these very companies, and I can tell you their success isn't some kind of magic. It's a relentless, almost obsessive, commitment to iteration that lets them solve tough problems and consistently outmaneuver everyone else.

Let’s pull back the curtain and see how they really do it.

How Spotify's Structure Enables Rapid Iteration

Spotify's organizational model became legendary for enabling iteration at scale. Its structure of "Squads, Chapters, and Guilds" is designed specifically to create small, autonomous teams (Squads) that own a feature area from end to end.

This setup lets them iterate independently and quickly, without getting stuck in bureaucratic mud. A squad working on the "Discover Weekly" playlist can dream up its own experiments, measure the impact on user listening hours, and ship changes without needing a sign-off from three layers of management. It’s a structure that basically forces teams to live and breathe the Build-Measure-Learn loop.

How Instagram Iterated to Dominate Social Video

A textbook case study in brilliant iteration is how Instagram launched and evolved "Stories." When they first shipped it, the feature was a shameless, almost feature-for-feature copy of Snapchat Stories. It was a huge risk, but it was their MVP—a blunt instrument to test one core hypothesis: Will our users create and consume fleeting, vertical video content on this platform?

The answer was a deafening yes. But they didn't pop the champagne and stop there. That initial launch was just the starting pistol for a series of rapid-fire iterations.

- Iteration 1: Add Stickers & Polls. They saw high engagement but wanted more direct interaction. The team measured an immediate spike in daily active creators and the number of DMs sent in response to Stories.

- Iteration 2: Introduce AR Filters. Seeing how well playful features worked, they doubled down. This was a direct shot at Snapchat's most beloved feature, and they tracked success by measuring the creation and sharing of videos using the new filters.

- Iteration 3: Integrate "Close Friends." Qualitative feedback surfaced a desire for more private sharing. They measured the success of this by tracking the number of "Close Friends" lists created and the volume of private stories shared—a metric signaling a much deeper level of trust.

Each step wasn't just a random feature lobbed over the fence. It was a calculated experiment, driven by user data and a sharp eye on the competition, designed to move a specific metric. This is what masterful iteration looks like: a chain of small, smart steps that compound into total market dominance.

Iterating on AI: The OpenAI Way

For AI Product Managers, the principles are identical, but the feedback loops can be incredibly powerful and far more complex. Picture yourself as a PM at OpenAI tasked with improving the ChatGPT interface. Your user data isn't just clicks and scrolls; it's the raw text of millions of conversations.

"We will make some good decisions and some missteps, but we will take feedback and try to fix the missteps very quickly. We plan to do our iteration on different approaches in Sora, but then apply it consistently across our products." – Sam Altman, CEO of OpenAI

This quote is the perfect summary of the iterative mindset needed for AI. Your "learning" phase is on steroids. You can use an AI model to sift through user prompts and spot patterns. For instance, are users constantly rephrasing their prompts in a certain way to get a better answer?

- Hypothesis: Users are struggling to figure out how to get the model to generate responses in a specific format (like a table).

- The "Build": You could add a small, contextual "tip" bubble that pops up when a user's prompt includes words like "compare" or "list in a table."

- The "Measure": You'd track the click-through rate on this new tip. But more importantly, you'd measure whether it leads to a higher success rate of users getting the tables they wanted in their very next prompt. You’re measuring actual task success.
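As a rough sketch, the tip trigger and the task-success metric from this example could look like the code below. Everything here is hypothetical: the function names, the session fields `tip_shown` and `next_response_was_table`, and the simple substring matching are assumptions for illustration, not a description of how any real product works.

```python
# Trigger phrases taken from the example above.
TRIGGER_PHRASES = ("compare", "list in a table")


def should_show_table_tip(prompt: str) -> bool:
    """Return True when the prompt contains one of the trigger phrases."""
    text = prompt.lower()
    return any(phrase in text for phrase in TRIGGER_PHRASES)


def next_prompt_success_rate(sessions: list[dict]) -> float:
    """Share of tip-shown sessions where the very next response was a table."""
    shown = [s for s in sessions if s["tip_shown"]]
    if not shown:
        return 0.0
    return sum(s["next_response_was_table"] for s in shown) / len(shown)


# Hypothetical session log.
sessions = [
    {"tip_shown": True, "next_response_was_table": True},
    {"tip_shown": True, "next_response_was_table": False},
    {"tip_shown": True, "next_response_was_table": True},
    {"tip_shown": False, "next_response_was_table": False},
]
print(should_show_table_tip("Compare these three pricing plans"))
print(f"{next_prompt_success_rate(sessions):.0%}")
```

To attribute any change to the tip itself, you would compare this rate against a control group that never saw it.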

By analyzing how people talk to the model, you can iterate on the UI to make it more intuitive and powerful. This approach turns raw user behavior into a direct input for the next development cycle, creating a product that feels like it's learning right alongside the user. These examples from Spotify, Instagram, and OpenAI give you a clear blueprint for applying iterative principles, whether you're working on a simple mobile feature or a complex AI model.

Common Iteration Pitfalls and How to Avoid Them

Knowing what an iteration is gets you to the starting line. But knowing the traps that lie ahead is what separates the great PMs from the rest. As someone who has hired and mentored product managers for years, I’ve seen the same mistakes derail promising careers and bring great products to a screeching halt.

These aren't just minor process hiccups. They’re fundamental misunderstandings that lead to wasted cycles, frustrated teams, and missed market windows. The good news? These pitfalls are entirely predictable and, more importantly, avoidable. Once you learn to spot them, you can steer your team clear, keeping your product on track and your stakeholders confident.

The Pitfall of Feature Creep

The most common trap, by far, is feature creep within a single iteration. You kick off a two-week sprint with a crystal-clear goal, but by day three, a stakeholder asks for “just one more small thing.” Before you know it, what started as a focused experiment has morphed into a bloated checklist. Your ability to get a clean signal from the work is gone.

To fight this, you have to become the ruthless guardian of your team's focus.

- Ask yourself: Are we adding new scope or changing requirements after this iteration has already started?

- The solution: Institute a strict "no-change" policy once an iteration is in flight. All new ideas and requests go straight into the backlog for the next planning session. This isn't about being difficult; it's about discipline. You can learn more about taming this beast by reading up on how to handle scope creep effectively.

Analysis Paralysis and Vague Hypotheses

Another career-killer is analysis paralysis. This is what happens when you're drowning in data but can't generate a single actionable insight. You run an A/B test, see a 0.5% lift, and then spend the next three weeks debating what it really means instead of just making a decision and moving on. This almost always stems from a failure to set a clear, falsifiable hypothesis from the get-go.

An iteration without a sharp hypothesis isn't an experiment; it's just busywork. You must be able to clearly state, "If we are right, we will see X metric move by Y%."

If you can't fill in those blanks, you aren't ready to build. Define what success looks like before a single line of code gets written.
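One way to force yourself to fill in those blanks is to write the hypothesis down as a small, immutable record before the build starts. This is a minimal sketch, not a standard tool. The `Hypothesis` class, its field names, and the 4% baseline are hypothetical, and the statement borrows the social-proof example from the scoping checklist.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: the target cannot be edited after the fact
class Hypothesis:
    statement: str
    metric: str                # the "X" in "X metric moves by Y%"
    baseline: float            # current value of the metric
    min_relative_lift: float   # the "Y%" committed to before building

    def target(self) -> float:
        """Metric value that must be reached for the hypothesis to hold."""
        return self.baseline * (1 + self.min_relative_lift)

    def is_supported(self, observed: float) -> bool:
        return observed >= self.target()


h = Hypothesis(
    statement="Adding social proof to the checkout page increases conversion",
    metric="checkout_conversion_rate",
    baseline=0.040,            # hypothetical baseline
    min_relative_lift=0.05,    # the 5% from the checklist example
)
print(f"target = {h.target():.4f}")
print(h.is_supported(0.045), h.is_supported(0.041))
```

Because the record is frozen, nobody can quietly lower the bar once the results come in, which is the whole point of a falsifiable hypothesis.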

The Shift to Smaller, Focused Cycles

The industry as a whole has learned these lessons the hard way. A huge study analyzing 40 years of software projects paints a very clear picture: after the year 2000, project sizes were halved and schedules were compressed dramatically.

Think about that. In the 1980s, the average project took a staggering 16 months. By the 2000s, iterative methods slashed that to just over 7 months. This wasn't because developers magically got twice as fast. It was because the industry finally realized that smaller, more focused iterations deliver better results, more efficiently. As you can see from the long-term trends in software data, disciplined, bite-sized cycles are a proven path to success.

Frequently Asked Questions About Iteration

As a PM, getting your head around the principles of iteration is a great start. But the real test comes when you have to apply them in the messy reality of daily work.

Here are some quick, no-fluff answers to the most common questions I hear from product managers trying to make iteration actually work for them.

How Do I Convince Leadership to Adopt Iteration?

Stop talking about process jargon. Start talking about risk and money. Frame iteration as a core business strategy, not a newfangled agile-thingy.

Propose a small pilot project to de-risk a much larger investment. You can literally say, "We have two options. We can spend six months and $500k building the feature we think is right. Or, we can spend one month and $50k to prove we're on the right track before committing the full budget."

When you use data to show how learning faster reduces project failure and keeps budgets in check, you're making a financial case that's hard to ignore.
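Here is a back-of-the-envelope version of that financial case, using the $500k and $50k figures from the pitch. The 50% chance of being wrong is a made-up input for illustration, and the sketch assumes the pilot reliably reveals a bad idea, which is optimistic.

```python
def expected_wasted_spend(full_cost: float, pilot_cost: float, p_wrong: float) -> dict[str, float]:
    """Compare expected money spent on a feature that turns out to be wrong.

    Assumes the pilot reliably reveals whether the idea is wrong;
    real pilots give noisier signals.
    """
    # Build first: the full budget is lost whenever the idea is wrong.
    build_first = p_wrong * full_cost
    # Pilot first: only the pilot budget is lost whenever the idea is wrong.
    pilot_first = p_wrong * pilot_cost
    return {"build_first": build_first, "pilot_first": pilot_first}


result = expected_wasted_spend(full_cost=500_000, pilot_cost=50_000, p_wrong=0.5)
print(result)  # {'build_first': 250000.0, 'pilot_first': 25000.0}
```

The honest trade-off is that when the idea turns out to be right, the pilot path costs $550k in total instead of $500k. Leadership is paying a $50k premium to avoid a much larger expected loss.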

What Is the Difference Between an Iteration and a Sprint?

This one trips a lot of people up, but it's pretty simple when you break it down.

Think of 'iteration' as the overarching philosophy—the whole Build-Measure-Learn loop. It's the "why." A 'sprint' is just a specific tool from the Scrum framework to get the work done. It's the "how."

A two-week sprint is the container for the work, but the goal of that work should always be to complete an iteration. You're trying to ship something, measure its impact, and learn something valuable that directly informs what you build in the next sprint.

In short, a sprint is a time-boxed vehicle for an iteration, but you can iterate without ever using the Scrum framework.

When Should You Not Use an Iterative Approach?

Iteration is a tool purpose-built for navigating uncertainty. It starts to become overkill when the problem and the solution are both crystal clear and there’s almost no risk in execution.

For example, if you're implementing a non-negotiable legal requirement—like a GDPR cookie banner with a fixed set of rules—there’s not much to learn. The same goes for building a simple, static website from a final, client-approved design.

If the requirements are truly stable and the technology is completely proven, a more direct, "waterfall" style build can actually be more efficient. But let's be honest, in modern product development, these situations are few and far between.

When to skip iteration:

- Low Uncertainty: The "what" and "how" are fully known and stable.

- Fixed Requirements: There is no room for feedback or change (e.g., legal compliance).

- Simple Execution: The project is straightforward with no technical or user experience risks.

How Is AI Changing Product Iteration for PMs?

AI is basically putting the entire iteration loop on steroids. It supercharges every single step.

For the "Build" phase, PMs are already using AI to generate first drafts of user stories or even spit out functional UI mockups. In the "Measure" phase, AI tools can tear through huge volumes of user feedback and behavioral data in minutes, surfacing patterns a human might take days to find.

And for "Learn," AI can synthesize all those findings and suggest data-backed hypotheses for your next cycle. For an AI-native product, the product itself is iterating and learning constantly. AI doesn't change the fundamental principles of iteration, but it's dramatically cranking up the speed and depth at which we can learn.
